Meet the Censored: Ford Fischer

The videographer is this week's entrant in a growing club of the digitally deleted

Only a year ago, the idea of suspending Donald Trump from Twitter was a Democratic Party fantasy, with putative new Vice President Kamala Harris leading the charge.

During election week it became the norm, amid a sharp escalation of politicized deletions, suspensions, and lockouts by tech platforms like Twitter and Facebook. Twitter essentially stuffed a pair of work gloves in Trump’s mouth in the days after Election Day, deleting over half of his tweets. Non-Trump social media mostly exulted:

Trump wasn’t the only one hit, nor was the phenomenon limited to conservatives like Dan Bongino, Maria Bartiromo, or Steve Bannon, who was banned for life after suggesting that Anthony Fauci and Christopher Wray’s heads be put “on pikes” at the “two corners of the White House” as a warning to federal bureaucrats. Even blue-party mouthpiece Neera Tanden, as perfect a representation of the political mainstream as draws breath, got slapped with a warning label.

While new platform-censorship controversies involving opposition movements in Nigeria, Vietnam, India, and other places made headlines abroad, Silicon Valley began using its content veto in the U.S. in new ways during election season. Moreover, in a recent Senate Commerce Committee hearing, the CEO of Google, Sundar Pichai, seemed to admit what’s been apparent for some time, that the World Socialist Web Site has taken an unnatural hit to its traffic. Here’s the key exchange with Utah Senator Mike Lee:

LEE: I’m just asking if you can name one high profile liberal person or company who you have censored…

PICHAI: We have had compliance issues with the World Socialist Review [sic], which is a left-leaning publication.

Videographer Ford Fischer is a frequent flyer when it comes to this issue. If you saw one of the more celebrated examples of liberal speech suppression this year — an incident in Tulsa, Oklahoma in which a woman was arrested and dragged out of a Trump rally for wearing an “I Can’t Breathe” t-shirt — you were probably looking at film by Fischer, one of the country’s leading shooters of protest video:

Fischer’s name first popped up on the content moderation front about thirty moral manias ago, in the summer of 2018. Shortly after Alex Jones was removed to the cheers of nearly everyone outside the political far right, hundreds of lesser-known independent outlets were either put out of business or forced to take hits to their financial bottom lines.

This was when politicians and pundits were in a fever to stop “Russian interference.” Ironically, they were less concerned with police brutality. Sites like Cop Block, Filming Cops, and Police the Police were among the most prominent removals in an October 2018 Facebook “purge” of sites accused of “coordinated inauthentic activity.”

Fischer was among the more prominent independent journalists hit in a later round of “content moderation.” His site News2Share was demonetized by YouTube on June 5, 2019.

Unlike other targeted sites, whose business models relied upon aggregated content, News2Share was built around original footage, often shot by Fischer himself in the middle of heated situations. In other words, he was a producer of the increasingly rare commodity called original reporting. He covered public political demonstrations across the spectrum, from a pro-Assange protest in London to a blue-friendly protest of the “Barr Coverup” after the release of the Mueller report, to a square-off between Antifa and gun activists in Ohio.

The videos that got Fischer in trouble in 2019 included ones titled “Holocaust denier Chris Dorsey confronted at AIPAC” and “Mike Enoch denounces ‘systematic elimination of white people.’” YouTube removed both, citing company policy against “content glorifying or inciting violence against another person or group of people,” or “content that encourages hatred of another person or group of people based upon their membership in a protected group.”

YouTube’s algorithm-based filtration appeared unaware that the same Fischer footage of Enoch that the company was claiming “encouraged hatred” had been used by PBS (which credited Fischer) for a documentary about Charlottesville that was introduced by Martin Luther King III at a film festival.

Fischer footage of Richard Spencer from that same event ended up in an Emmy-winning documentary called “White Right: Meeting the Enemy,” and still more footage of his ended up in a New York Times video called “How an Alt-Right Leader Climbed the Ranks” — none of which, obviously, triggered content warnings.

The Tulsa incident laid bare the obvious new skewing of the media’s financial landscape. Fischer made some money covering the arrest of the woman in the “I Can’t Breathe” shirt, including by licensing the footage to ABC, but YouTube demonetized the video on his channel, on the grounds that it depicted “severe real injury” or other types of prohibited violence.

However, nearly identical footage of the same scene shot by MSNBC is monetized on YouTube (Fischer even saw a Trump ad run on it). Similarly, footage he took of Confederate general Albert Pike’s statue being toppled was demonetized, but when more “reputable” news organizations put up their own nearly identical videos, ads ran freely (an ad for “Wix.com” ran on the Telegraph’s version, for instance).

In the run-up to the election and the days just after it, Fischer had three more incidents.

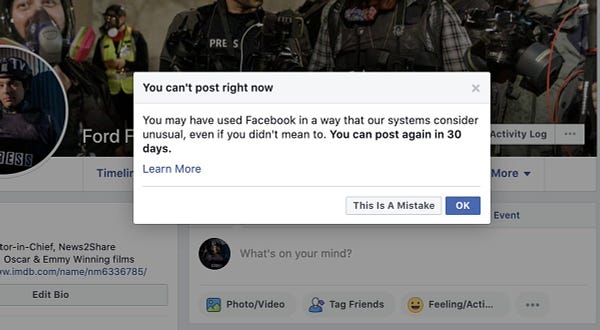

On September 5, his Facebook account was banned, then restored the next day after a viral tweet thread.

On November 1, he was hit with a “complex entities interaction” ban after showing film of police arresting protesters, which prevented him from posting to groups.

Finally, on the morning after the election, he was suspended for 30 days for posting images of anti-Trump protests in an “unusual” way. This time Facebook reversed its decision within an hour, but he’ll likely keep getting zapped as content policing becomes more aggressive.

The Fischer case is interesting because it exposes the challenges of trying to regulate hate speech, political extremism, and even threats. What’s the line between reporting on extremism and promoting it?

Fischer’s detractors often hint at bias in his coverage (the usual accusation involves the fact that he once interned at Reason magazine), but this moderation system has a built-in legitimacy problem if the issue isn’t the content itself but who’s distributing it. I asked Fischer about these and other questions:

Q. Would it be correct to say you film protests and marches of groups across the spectrum, as a business strategy? Is this your job, or a political calling?

I try to film mostly political activism across the political spectrum, both as a business strategy and a calling. In an era where cable news is stuffed with commentators and panelists talking about those effecting change in our country, I'd like people to see raw video and livestreams that give them an idea of what activists are calling for, and how they're doing so, unfiltered. I think it's a good business strategy that attracts people looking for more on-the-ground coverage. It also gives me a complete library of works for documentaries and news outlets to license from.

The calling aspect of it for me is that I truly believe the cable news media leaves people incompletely informed and increasingly divided with the style they use to report these issues. I believe my format, a sort of C-SPAN-and-summary library of activism, can leave people with a greater understanding of those advocating for issues in this country, whether they agree with those people or not.

I'd add that another primary purpose of my work is recording police response to activism: use of force, justifications for arrest, etc. These things are important not only for informing the public, but for accountability in the courts. I generally provide my raw video to defendants in criminal cases and civilian parties to lawsuits.

Q. Have you been blocked for displaying the same footage more “reputable” outlets have shown?

I've absolutely encountered censorship, age-restriction, and demonetization on Facebook and YouTube for filming the same incidents [as] mainstream outlets that face no similar outcome. My livestream of Unite the Right 2 was removed from Facebook in real time. C-SPAN had been streaming the same speeches from right next to me.

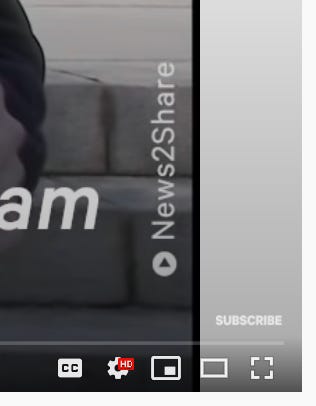

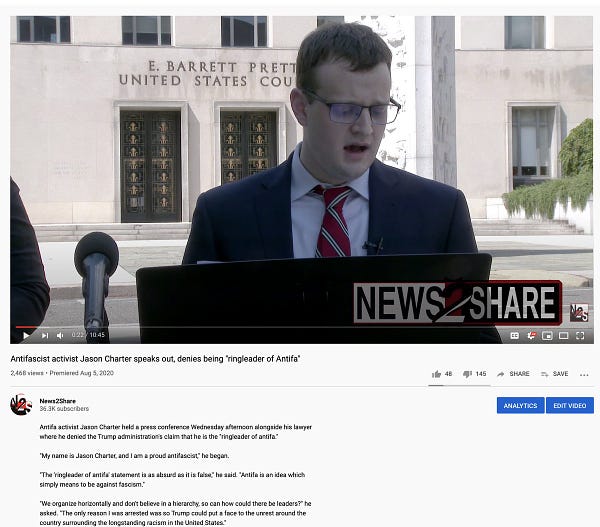

A couple of YouTube examples: I filmed an Antifa activist giving a press conference with his lawyer. Literally just talking. With a lawyer. YouTube slapped it with hate speech and graphic violence labels:

But when I licensed the same presser to NowThis, their version of my exact video was monetized without issue. YouTube said they wouldn't overturn it because I had exhausted my appeal, and the appeal review is final, but they were just wrong. As another example, I hired a freelancer to film a civil disobedience rally. No violence, just people being arrested for blocking a road. YouTube called it "graphic violence" on my channel, but on Ruptly and Bloomberg, their videos (not the same footage, but exactly the same incident filmed by whoever they had as a source) were monetized.

Q. Is the problem that platforms don’t distinguish between covering/filming extremist groups and actually propagandizing them? Or is there no difference?

I actually think there are a few issues at play. YouTube and Facebook seem to be relying increasingly on robots to make their decisions, and I think automated decision-making fails to understand the difference between content promoting groups and content covering them. Again, to this day a link to my work on the Southern Poverty Law Center website is dead because Facebook killed it.

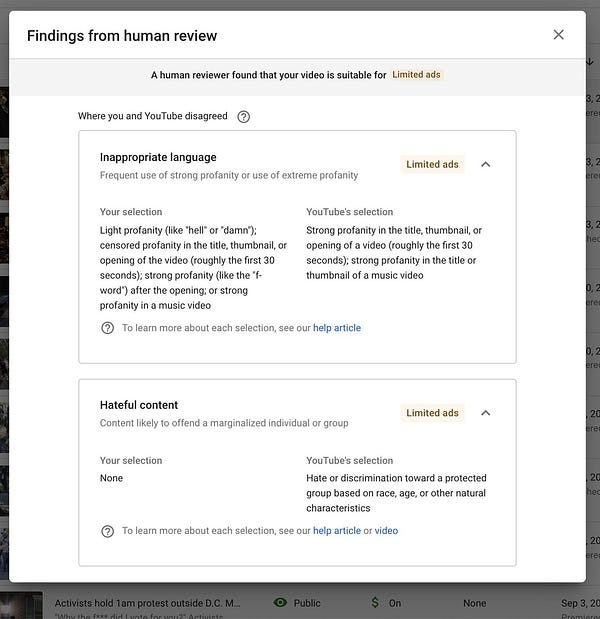

But I also think part of the issue is that supposed human reviewers are just not actually looking when they make decisions. YouTube claims their ratings about violence, language, and hate content are based on human review, following appeals of what they admit are automated demonetizations. For me, most of the reviewers just get it absolutely wrong. Protests against cops are labeled as hate speech. Non-violent civil disobedience is labeled as graphic violence. I could give hundreds of examples of this. My impression is that somewhere, some moderator is supposed to be watching videos and making decisions, but instead, they rush through and take wild guesses.

Q. How much has platform censorship cost you?

I hesitate to put a public dollar amount on what censorship has cost me, but I think there are a few angles to approach the question. I tend to think of my Patreon and my ad revenue as my budget for hiring people and filming what I cover. Demonetization has directly cost me roughly half of that over time (and all of it from YouTube during the 7-month window). Beyond that, YouTube and Facebook have both prided themselves on efforts to algorithmically push viewers toward "reputable sources."

My source of profit, and one of the primary purposes of my work, is licensing to documentaries and news programs. It's almost impossible to measure how much YouTube and Facebook's shadowy classification system has cost me in potential filmmakers and news outlets that didn't find my footage because other outlets with similar content, such as AP, Reuters, and C-SPAN, came up ahead of mine algorithmically.

Q. Is this problem getting worse or better?

This problem is undoubtedly getting worse... I think that for me and a lot of my friends, 2018 felt like the beginning of the slippery slope, and it's gotten exponentially worse across platforms since then. While I am active on Twitter, I will say that I have not encountered any similar issues there myself. I'd really love a goddamned verification checkmark there, though.

I'm curious if there are some under-the-table agreements in place, so that these social media giants give favor to news media giants by subduing independent entities that might compete with them.
