Alternatives to Censorship: Interview With Matt Stoller

As Congress once again demands that Silicon Valley crack down on speech, the Director of Research at the American Economic Liberties Project outlines the real problem, and better solutions

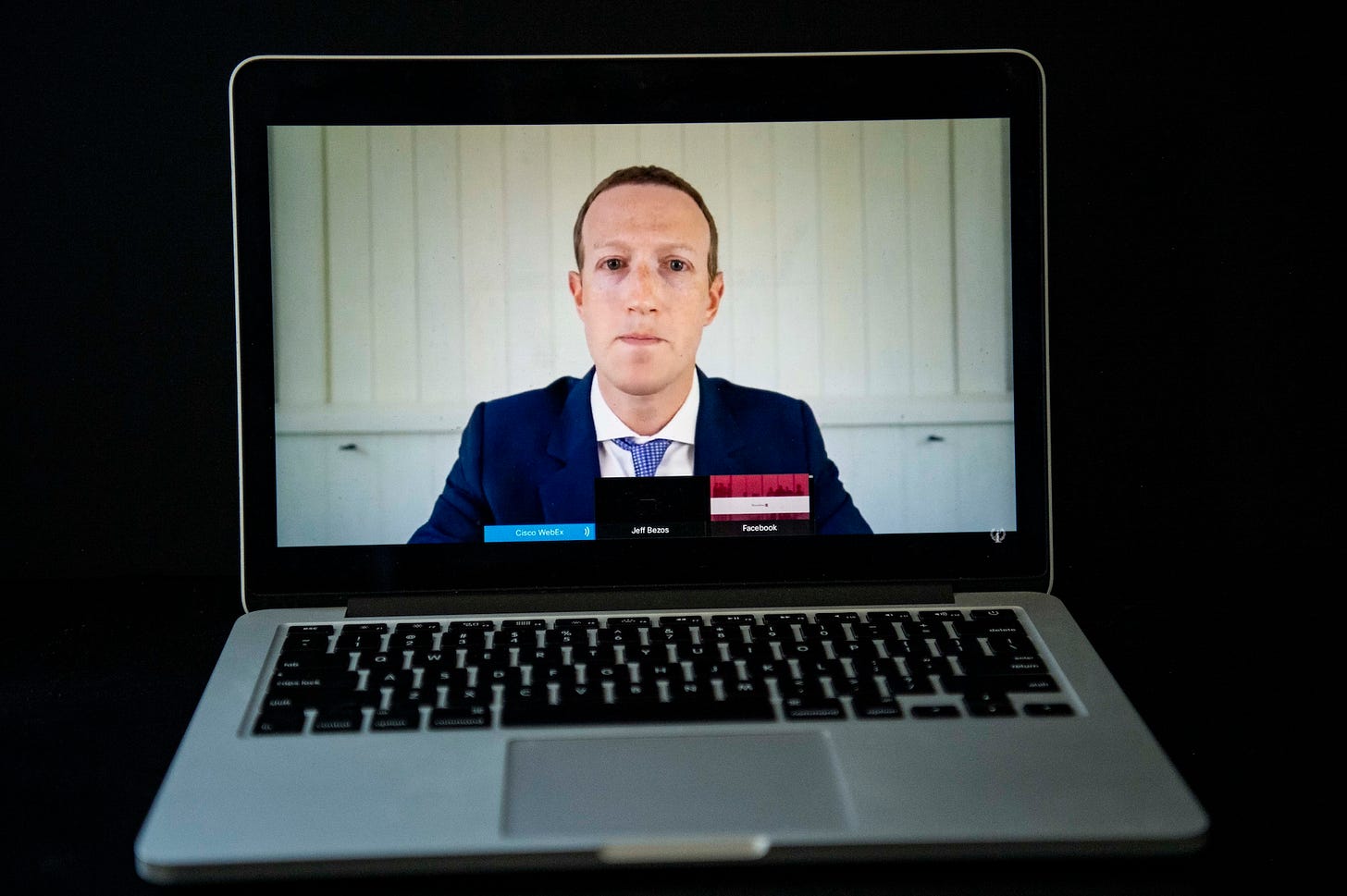

Led by Chairman Frank Pallone, the House Energy and Commerce Committee Thursday held a five-hour interrogation of Silicon Valley CEOs entitled, “Disinformation Nation: Social Media's Role in Promoting Extremism and Misinformation.”

As Glenn Greenwald wrote yesterday, the hearing was at once agonizingly boring and frightening to speech advocates, filled with scenes of members of Congress demanding that monopolist companies engage in draconian crackdowns.

Again, as Greenwald pointed out, one of the craziest exchanges involved Texas Democrat Lizzie Fletcher:

Fletcher brought up the State Department’s maintenance of a list of Foreign Terrorist Organizations. She praised the CEOs of Twitter, Facebook, and Google, saying that “by all accounts, your platforms do a better job with terrorist organizations, where that post is automatically removed with keywords or phrases and those are designated by the state department.”

Then she went further, chiding the firms for not doing the same domestically, asking, “Would a federal standard for defining a domestic terror organization similar to [Foreign Terrorist Organizations] help your platforms better track and remove harmful content?”

At another point, Fletcher noted that material from the January 6th protests had been taken down (for TK interviews of several of the videographers affected, click here) and said, “I think we can all understand some of the reasons for this.” Then she complained about a lack of transparency, asking the members, “Will you commit to sharing the removed content with Congress?” so that they can continue their “investigation” of the incident.

Questions like Fletcher’s suggest Congress wants to create a multi-tiered informational system, one in which “data transparency” means sharing content with Congress, but not the public.

Worse, they’re seeking systems of “responsible” curation that might mean private companies like Google enforcing government-created lists of bannable domestic organizations, which is pretty much the opposite of what the First Amendment intended.

Under the system favored by Fletcher and others, these monopolistic firms would target speakers as well as speech, a major departure from our current legal framework, which focuses on speech connected to provable harm.

As detailed in an earlier article about NEC appointee Timothy Wu, these solutions presuppose that the media landscape will remain highly concentrated, the power of these firms just deployed in a direction more to the liking of House members like Fletcher, Pallone, Minnesota’s Angie Craig, and New York’s Alexandria Ocasio-Cortez, as well as Senators like Ed Markey of Massachusetts.

Remember this quote from Markey: “The issue isn’t that the companies before us today are taking too many posts down. The issue is that they’re leaving too many dangerous posts up.”

These ideas are infected by the same fundamental reasoning error that fueled the Hill’s previous drive for tech censorship in the Russian misinformation panic. Do countries like Russia (and Saudi Arabia, Israel, the United Arab Emirates, China, Venezuela, and others) promote division, misinformation, and the dreaded “societal discord” in the United States? Sure. Of course.

But the sum total of the divisive efforts of those other countries makes up at most a tiny fraction of the divisive content we ourselves produce in the United States, as an intentional component of our commercial media system, which uses advanced analytics and engagement strategies to get us upset with each other.

As Matt Stoller, Director of Research at the American Economic Liberties Project, puts it, describing how companies like Facebook make money:

It's like if you were in a bar and there was a guy in the corner that was constantly egging people onto getting into fights, and he got paid whenever somebody got into a fight? That's the business model here.

As Stoller points out in a recent interview with Useful Idiots, the calls for Silicon Valley to crack down on “misinformation” and “extremism” are rooted in a basic misunderstanding of how these firms make money. Even as a cynical or draconian method for clamping down on speech, getting Facebook or Google to eliminate lists of taboo speakers wouldn’t work, because it wouldn’t change the core function of these companies: selling ads through surveillance-based herding of users into silos of sensational content.

These utility-like firms take in data from everything you do on the Web, whether you’re on their sites or not, and use that information to create a methodology that allows a vendor to buy the most effective possible ad, in the cheapest possible location. If Joe Schmo Motors wants to sell you a car, it can either pay premium prices to advertise in a place like Car and Driver, or it can go to Facebook and Google, who will match that car dealership to a list of men aged 55 and up who looked at an ad for a car in the last week, and target them at some other, cheaper site.

In this system, bogus news “content” has the same role as porn or cat videos — it’s a cheap method of sucking in a predictable group of users and keeping them engaged long enough to see an ad. The salient issue with conspiracy theories or content that inspires “societal discord” isn’t that they achieve a political end, it’s that they’re effective as attention-grabbing devices.

The companies’ use of these ad methods undermines factuality and journalism in multiple ways. One, as Stoller points out, is that the firms are literally “stealing” from legitimate news organizations. “What Google and Facebook are doing is they're getting the proprietary subscriber and reader information from the New York Times and Wall Street Journal, and then they're advertising to them on other properties.”

As he points out, if a company did this through physical means — breaking into offices, taking subscriber lists, and targeting the names for ads — “We would all be like, ‘Wow! That's outrageous. That's crazy. That's stealing.’” But it’s what they do.

Secondly, the companies’ model depends upon keeping attention siloed. If users are regularly exposed to different points of view, if they develop healthy habits for weighing fact versus fiction, they will be tougher targets for engagement.

So the system of push notifications and surveillance-inspired news feeds stresses feeding users content that’s in the middle of the middle of their historical areas of interest: the more efficient the firms are in delivering content that aligns with your opinions, the better their chance at keeping you engaged.

Rope people in, show them ads in spaces that in a vacuum are cheap but which Facebook or Google can sell at a premium because of the intel they have, and you can turn anything from QAnon to Pizzagate into cash machines.

After the January 6th riots, Stoller’s organization wrote a piece called, “How To Prevent the Next Social Media-Driven Attack On Democracy—and Avoid a Big Tech Censorship Regime” that said:

While the world is a better place without Donald Trump’s Twitter feed or Facebook page inciting his followers to violently overturn an election, keeping him or other arbitrarily chosen malignant actors off these platforms doesn’t change the incentive for Facebook or other social networks to continue pumping misinformation into users’ feeds to continue profiting off of ads.

In other words, until you deal with the underlying profit model, no amount of censoring will change a thing. Pallone hinted that he understood this a little on Thursday, when he asked Zuckerberg if it were true, as the Wall Street Journal reported last year, that in an analysis done in Germany, researchers found that “Facebook’s own engagement tools were tied to a significant rise in membership in extremist organizations.” But most of the questions went in the other direction.

“The question isn't whether Alex Jones should have a platform,” Stoller explains. “The question is, should YouTube have recommended Alex Jones 15 billion times through its algorithms so that YouTube could make money selling ads?”

Below is an excerpted transcript from the Stoller interview at Useful Idiots, part of which is already up here.
